Hey everyone, and welcome to my first Hashnode article about Kafka. In this article, I'm going to show you how to write your first app using Kafka and Spring Boot, and I may show you how to save the data using the ELK stack later.

What is Kafka?

In short, it's an open-source project developed by LinkedIn and later donated to Apache. The project aims to process large amounts of incoming data in real time, with high throughput and low latency, and with fault tolerance in mind. For more info, you can visit the Wikipedia page or DZone.

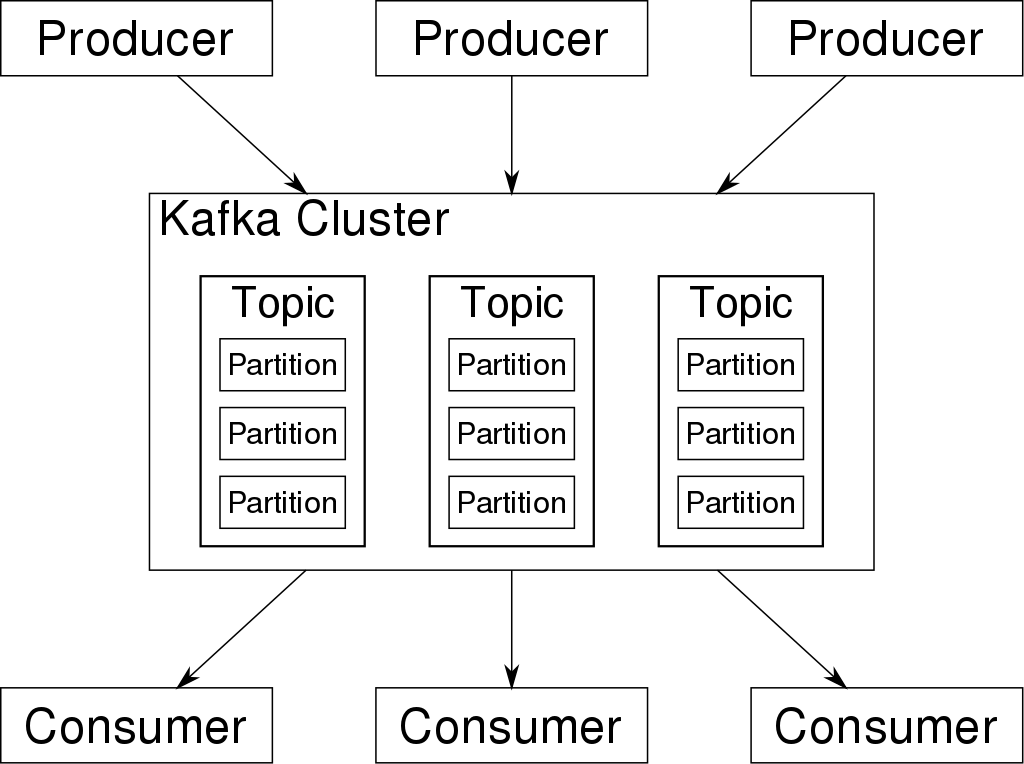

Architecture

As you can see in the image above, there are three major components in Kafka.

The producer, where all the messages are sent from.

The consumers, which receive the incoming data and process it later on.

The Kafka cluster, which has multiple brokers that manage the persistence and replication of message data.
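To make these three roles a bit more concrete, here is a tiny in-memory sketch in plain Java. It is not the real Kafka client API: `ToyBroker`, `ToyProducer`, and `ToyConsumer` are names I made up for illustration, and there is no network, partitioning, or replication here. It only shows the basic flow, where a producer appends messages to a topic's log and a consumer reads from its own offset.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Demo entry point: one producer, one broker, one consumer.
class ToyKafkaDemo {
    public static void main(String[] args) {
        ToyBroker broker = new ToyBroker();
        ToyProducer producer = new ToyProducer(broker);
        ToyConsumer consumer = new ToyConsumer(broker, "greetings");

        producer.send("greetings", "hello");
        producer.send("greetings", "kafka");

        System.out.println(consumer.poll()); // prints [hello, kafka]
        System.out.println(consumer.poll()); // prints [] (nothing new to read)
    }
}

// Hypothetical stand-in for a broker: one append-only log per topic.
class ToyBroker {
    private final Map<String, List<String>> logs = new HashMap<>();

    // Producer side: append a message and return its offset in the log.
    synchronized long append(String topic, String message) {
        List<String> log = logs.computeIfAbsent(topic, t -> new ArrayList<>());
        log.add(message);
        return log.size() - 1;
    }

    // Consumer side: return a copy of every message from fromOffset onward.
    synchronized List<String> read(String topic, long fromOffset) {
        List<String> log = logs.getOrDefault(topic, new ArrayList<>());
        int start = (int) Math.min(Math.max(fromOffset, 0), log.size());
        return new ArrayList<>(log.subList(start, log.size()));
    }
}

// Hypothetical producer: it only knows how to hand messages to the broker.
class ToyProducer {
    private final ToyBroker broker;

    ToyProducer(ToyBroker broker) {
        this.broker = broker;
    }

    long send(String topic, String message) {
        return broker.append(topic, message);
    }
}

// Hypothetical consumer: it remembers the offset of the next unread message.
class ToyConsumer {
    private final ToyBroker broker;
    private final String topic;
    private long nextOffset = 0;

    ToyConsumer(ToyBroker broker, String topic) {
        this.broker = broker;
        this.topic = topic;
    }

    List<String> poll() {
        List<String> batch = broker.read(topic, nextOffset);
        nextOffset += batch.size();
        return batch;
    }
}
```

The point to notice is that the broker keeps the messages after they are read, and each consumer just tracks its own position in the log. That is why the second `poll()` comes back empty instead of repeating the messages.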

Setup Kafka

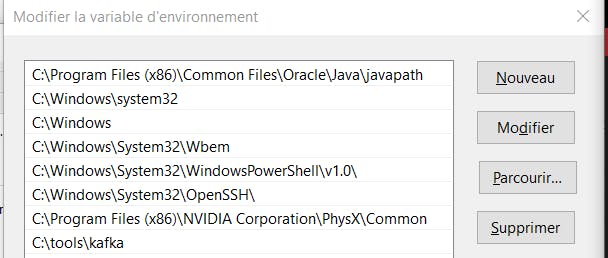

You need to have Java installed on your machine and added to your PATH variable, which is an easy task to do.

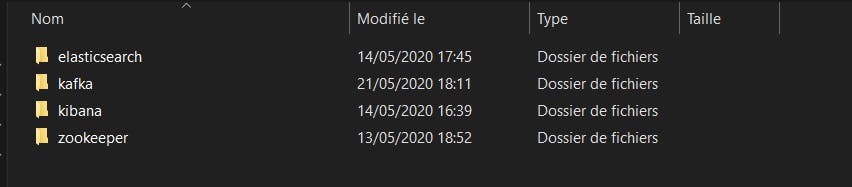

Download Kafka from the Apache website, extract the files to your C:/ or D:/ drive, and add the source folder to your PATH like so:

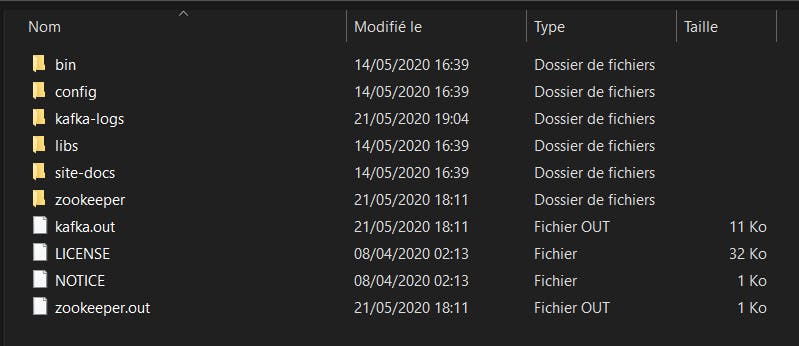
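If you want to double-check the setup from Java itself, here is a small sketch that prints the Java version and looks through your PATH entries. The `PathCheck` class and the "kafka" search term are my own assumptions: the check only works if the folder you extracted has "kafka" somewhere in its name.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

class PathCheck {
    // Split a PATH-style string into its entries, skipping empty ones.
    static List<String> entries(String pathValue, String separator) {
        List<String> result = new ArrayList<>();
        if (pathValue == null) {
            return result;
        }
        for (String entry : pathValue.split(Pattern.quote(separator))) {
            String trimmed = entry.trim();
            if (!trimmed.isEmpty()) {
                result.add(trimmed);
            }
        }
        return result;
    }

    // True if any PATH entry contains the given text, ignoring case.
    static boolean mentions(String pathValue, String separator, String needle) {
        for (String entry : entries(pathValue, separator)) {
            if (entry.toLowerCase().contains(needle.toLowerCase())) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        String path = System.getenv("PATH");
        System.out.println("Java version: " + System.getProperty("java.version"));
        // "kafka" is an assumed folder name; change it to match your own folder.
        System.out.println("Kafka on PATH: " + mentions(path, File.pathSeparator, "kafka"));
    }
}
```

If the second line prints `false`, the folder is probably missing from PATH, or cmd was opened before you saved the change.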

If you like working with cmd, congrats, you can start right away: open cmd in the folder where you extracted Kafka, go to the bin folder, and enjoy.

If you prefer working with a GUI, I found two tools:

- First, you should have a little bit of knowledge of Docker; you can follow this link, which will guide you.

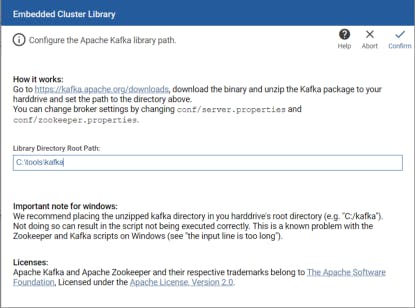

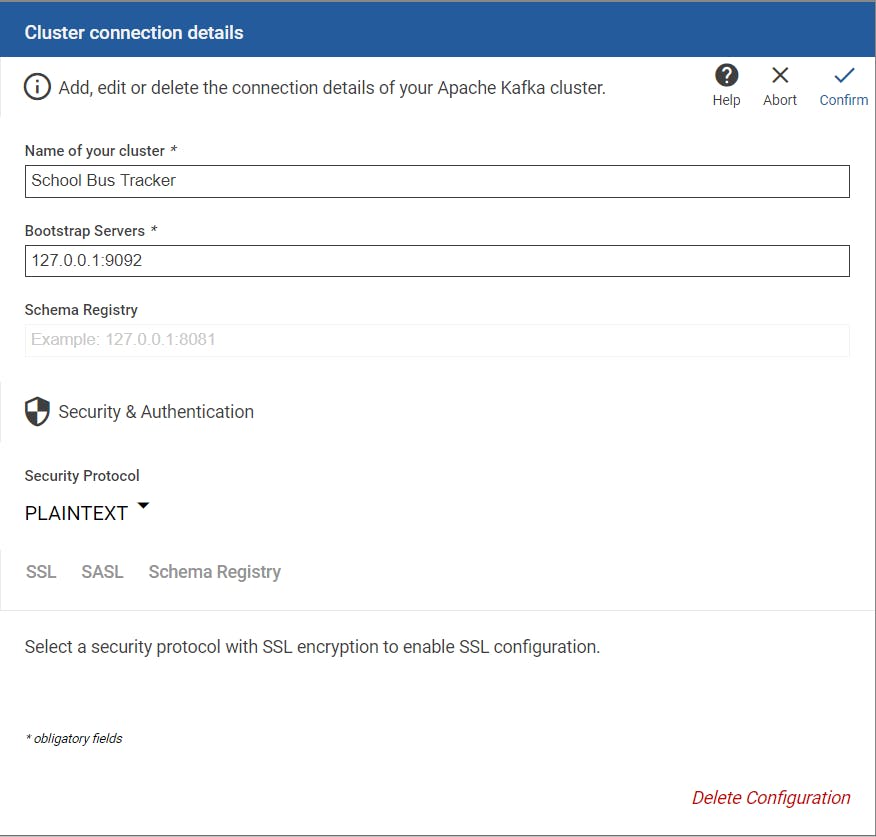

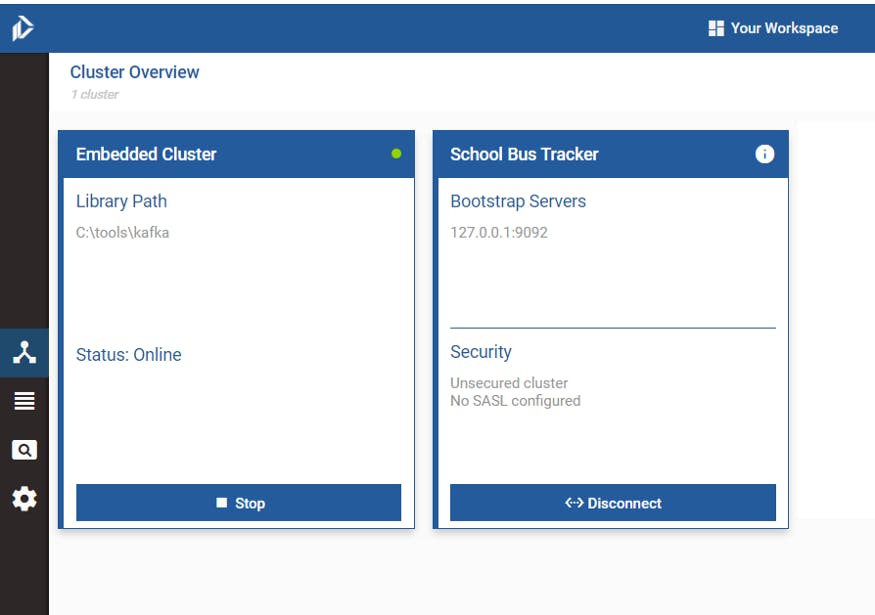

- Second, you can download the app from here. You only need to fill in the embedded cluster input library with your local Kafka cluster and hit confirm. After that, create a cluster, run it, and you're good to go.